How to Make Web Scraping Efficient and Automatic

Introducing a tool that makes web scraping easy to manage, organise, and automate.

One of the best and easiest ways to get data from a source is its official API, but most of the time the source does not offer one.

That is where scraping comes in.

So let’s start with

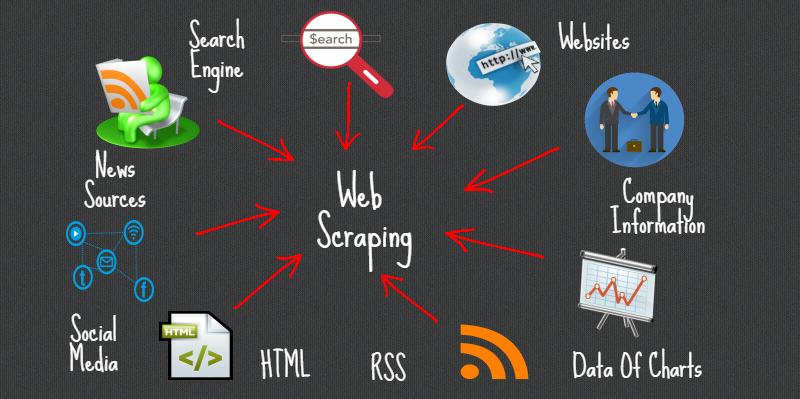

What is web scraping?

In short, web scraping means extracting the data you need from web pages. The purpose of scraping is to collect data from different websites and store it in your own database.

For example, search engines like Google crawl websites in order to return up-to-date results for a given search. This is done by a piece of code called a scraper.
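To make the idea concrete, here is a minimal sketch of a scraper that pulls values out of a page using only Python's standard library. The sample HTML, the `price` class name, and the `PriceParser` and `extract_prices` names are assumptions made up for illustration; a real scraper would first download the page and would need selectors that match the target site.

```python
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collects the text of every element whose class attribute is 'price'."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if dict(attrs).get("class") == "price":
            self._in_price = True

    def handle_endtag(self, tag):
        # Any closing tag ends the current price element in this simple sketch.
        self._in_price = False

    def handle_data(self, data):
        if self._in_price and data.strip():
            self.prices.append(data.strip())


def extract_prices(html: str) -> list[str]:
    parser = PriceParser()
    parser.feed(html)
    return parser.prices


# Hypothetical page content standing in for a downloaded web page.
SAMPLE_HTML = """
<ul>
  <li><span class="name">Widget</span> <span class="price">9.99</span></li>
  <li><span class="name">Gadget</span> <span class="price">24.50</span></li>
</ul>
"""

print(extract_prices(SAMPLE_HTML))  # ['9.99', '24.50']
```

The extracted list is what would then be stored in a database for later use.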

Why do you need scraping for your business?

There are a number of ways to use web scraping in your business if it falls into one of the categories listed below:

a) Services that rely on existing web data to build a business.

b) Services that use the web to augment existing data.

Some of the use cases are:

1. To extract data and save it offline for your business, which would take a lot of time to gather manually.

2. To optimize your product's price against competitors.

3. To stay ahead of the market.

4. To extract your product's reviews and get to know your customer base.

5. To collect data for market research.

6. To track prices across multiple markets.

You may find many existing solutions for scraping, but a standalone scraper won't be enough.

A scraper helps you get the data you want from the web, but the scraper itself also needs monitoring.

There are many things to look after when you build a scraper yourself.

An efficient scraping tool must not only scrape the desired content but also reduce manual effort, apply some intelligence, monitor continuously, and work proactively. We've built a tool that has all of these features.

What does our scraper tool do?

These are some of the things to look after when developing and setting up a scraper:

Track crawling scripts to get their status at runtime:

The scraper tool keeps a record of each script and provides its real-time status: whether the scraper has just started, is running, has failed, or has finished crawling successfully.
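As a rough illustration of those four states, the sketch below wraps a crawl function and records each status it passes through. The `ScraperStatus` enum and `run_with_status` helper are hypothetical names chosen for this example, not the tool's actual interface.

```python
from enum import Enum


class ScraperStatus(Enum):
    STARTED = "started"
    RUNNING = "running"
    FAILED = "failed"
    DONE = "done"


def run_with_status(crawl, history):
    """Run `crawl` and append each status it passes through to `history`.

    Returns the final status (DONE or FAILED).
    """
    history.append(ScraperStatus.STARTED)
    try:
        history.append(ScraperStatus.RUNNING)
        crawl()
    except Exception:
        # Any error raised by the crawl marks the run as failed.
        history.append(ScraperStatus.FAILED)
    else:
        history.append(ScraperStatus.DONE)
    return history[-1]


def broken_crawl():
    raise RuntimeError("page layout changed")


ok_history, bad_history = [], []
print(run_with_status(lambda: None, ok_history).value)
print(run_with_status(broken_crawl, bad_history).value)
```

Because every transition is appended to a list, a monitoring page could show the latest entry as the live status and the rest as a timeline.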

Schedule the timing of the scraper:

Every scraper can be scheduled to run every 5 minutes, hourly, on weekdays, on weekends, or monthly. Once the timing has been set, the system runs the scraper at that time automatically.
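One simple way to picture such scheduling is a check that runs once a minute and asks whether a scraper is due. The sketch below assumes, purely for illustration, that daily and monthly schedules fire at midnight and that monthly means the first day of the month; the schedule names and the `is_due` function are hypothetical.

```python
from datetime import datetime


def is_due(schedule: str, now: datetime) -> bool:
    """Return True if a scraper with the given schedule should start at `now`.

    Assumes the caller invokes this once per minute.
    """
    at_midnight = now.hour == 0 and now.minute == 0
    if schedule == "every_5_minutes":
        return now.minute % 5 == 0
    if schedule == "hourly":
        return now.minute == 0
    if schedule == "weekdays":
        # weekday() returns 0 for Monday through 6 for Sunday.
        return at_midnight and now.weekday() < 5
    if schedule == "weekends":
        return at_midnight and now.weekday() >= 5
    if schedule == "monthly":
        return at_midnight and now.day == 1
    raise ValueError(f"unknown schedule: {schedule}")


# 1 January 2024 was a Monday.
print(is_due("weekdays", datetime(2024, 1, 1, 0, 0)))
print(is_due("every_5_minutes", datetime(2024, 1, 1, 10, 37)))
```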

Start the scraper whenever needed with a single click:

When you want to know whether your competitors are offering a lower price for the same product you have, you're not going to wait for your scraper to start at its scheduled time and return the price. Not to worry: we've built our tool so that you can start the scrapers whenever you want.

Get the result of the scraper wherever you want:

You have the crawling scripts in one place and you want their output somewhere else. So what will you do, keep moving things around?

Our tool solves this problem as well: we've built both pull and push APIs for scraper output. All you need to do is request data from the pull API, or receive the result directly at your desired destination through the push API.
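The difference between the two delivery styles can be sketched in a few lines. This is an in-process illustration only, with a hypothetical `OutputHub` class; the real pull and push APIs would work over the network and their endpoints are not described here.

```python
class OutputHub:
    """Stores scraper output (pull) and forwards it to subscribers (push)."""

    def __init__(self):
        self._results = []
        self._subscribers = []

    def subscribe(self, callback):
        # Push: the consumer registers once and is called for every new result.
        self._subscribers.append(callback)

    def publish(self, scraper_name, record):
        item = {"scraper": scraper_name, "record": record}
        self._results.append(item)
        for callback in self._subscribers:
            callback(item)

    def pull(self, scraper_name):
        # Pull: the consumer asks for stored results whenever it wants them.
        return [r["record"] for r in self._results if r["scraper"] == scraper_name]


hub = OutputHub()
received = []
hub.subscribe(received.append)
hub.publish("price-tracker", {"product": "Widget", "price": "9.99"})

print(hub.pull("price-tracker"))
print(received)
```

With pull, the consumer decides when to fetch; with push, the data arrives as soon as the scraper produces it.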

Enable or Disable the Scraper:

The web page your scraper is crawling may have changed its design, may no longer exist, or may have server issues. In that case, the scraper needs to be stopped and fixed. The scraper tool has algorithms that disable the scraper if it keeps producing errors and notify you about it. You just need to have the scripts fixed and then enable the scraper again yourself.
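One plausible way to implement this behaviour is to count consecutive failures and disable the scraper once a threshold is reached. The sketch below is an assumption about how such logic could look, not the tool's actual algorithm; the `ScraperGuard` class, the threshold of 3, and the `notify` callback are all hypothetical.

```python
class ScraperGuard:
    """Disables a scraper after too many consecutive failed runs."""

    def __init__(self, max_consecutive_failures=3, notify=print):
        self.max_consecutive_failures = max_consecutive_failures
        self.notify = notify
        self.enabled = True
        self.failures = 0

    def record(self, success: bool) -> None:
        if success:
            # A successful run resets the failure streak.
            self.failures = 0
            return
        self.failures += 1
        if self.enabled and self.failures >= self.max_consecutive_failures:
            self.enabled = False
            self.notify(f"Scraper disabled after {self.failures} consecutive failures")

    def enable(self) -> None:
        # Called manually once the script has been fixed.
        self.enabled = True
        self.failures = 0


messages = []
guard = ScraperGuard(max_consecutive_failures=3, notify=messages.append)
for _ in range(3):
    guard.record(success=False)

print(guard.enabled)
print(messages)
```

Resetting the counter on success means an occasional server hiccup does not disable a scraper that is otherwise healthy.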

Export the History of the output:

Our tool will also provide you with the history of output produced by the scraper. This will give you some valuable insights about the data you're extracting from different websites.

Add / Import new web pages for your crawler:

With the scraper tool, you can add new web pages or import a file of URLs to crawl at any time.

See how crawling scripts perform over time:

An informative dashboard gives you an overview of your crawler over time: for example, how many times it has been executed, how much data it has produced, how many times it has failed, the status of the running crawler, how many inputs have been given to the crawler, and more.
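The figures listed above are simple aggregates over a run history. As a sketch, assuming each run is stored as a dictionary with hypothetical `status`, `records`, and `inputs` fields, they could be computed like this:

```python
def summarize(runs):
    """Aggregate a list of run records into dashboard-style totals."""
    return {
        "executions": len(runs),
        "failures": sum(1 for r in runs if r["status"] == "failed"),
        "records_produced": sum(r["records"] for r in runs),
        "inputs_given": sum(r["inputs"] for r in runs),
        "latest_status": runs[-1]["status"] if runs else None,
    }


# Made-up run history for illustration only.
sample_runs = [
    {"status": "done", "records": 120, "inputs": 10},
    {"status": "failed", "records": 0, "inputs": 10},
    {"status": "running", "records": 45, "inputs": 12},
]

print(summarize(sample_runs))
```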

We have an experienced team of web scraping and crawling specialists who have built 1000+ crawlers and have worked through and successfully resolved millions of web scraping problems.

If you want a demo of our tool or have questions about it, please contact us in the comment section. We will get back to you as soon as possible.

Happy Data Crunching

Thank You

Shyam Verma

Full Stack Developer & Founder

Shyam Verma is a seasoned full stack developer and the founder of Ready Bytes Software Labs. With over 13 years of experience in software development, he specializes in building scalable web applications using modern technologies like React, Next.js, Node.js, and cloud platforms. His passion for technology extends beyond coding—he's committed to sharing knowledge through blog posts, mentoring junior developers, and contributing to open-source projects.